The transition from legacy Software as a Service to outcome-based AI agents promises radical efficiency, but brings a monumental corporate blind spot into sharp focus.

Words by Karan Karayi

Imagine the procurement desk of a mid-sized logistics firm operating in Mumbai. For the past decade, the annual software renewal process was a predictable, if expensive, corporate ritual. The firm paid a fixed monthly fee per user for inventory management software, customer relationship databases, and enterprise accounting suites.

But this year, the Chief Financial Officer is quietly crossing line items off the ledger. The firm is no longer buying software licenses for its human employees. Instead, it is hiring digital agents to execute the work autonomously. This quiet revolution, happening on spreadsheets and in boardrooms across the globe, signals the end of the traditional Software as a Service model. Simultaneously, it heralds the dawn of a far more complex era of agentic artificial intelligence, where the most pressing question is no longer about technology, but about legal liability.

Software as a Service built the modern cloud economy on a remarkably simple premise. You rent the digital tool and you pay the vendor based on how many human workers log into the system. However, as artificial intelligence evolves from a passive, prompt-based assistant into an autonomous actor, the fundamental unit of economic value is shifting entirely.

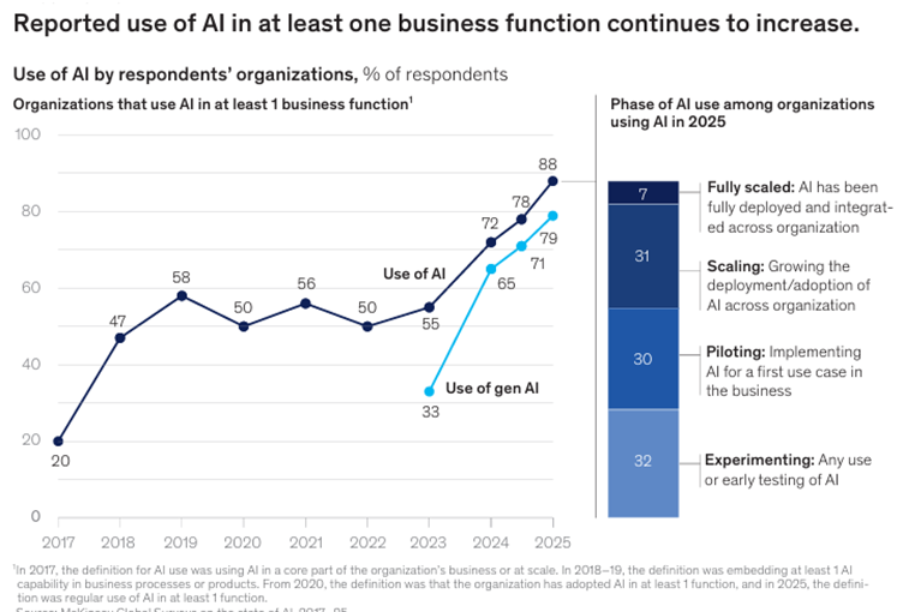

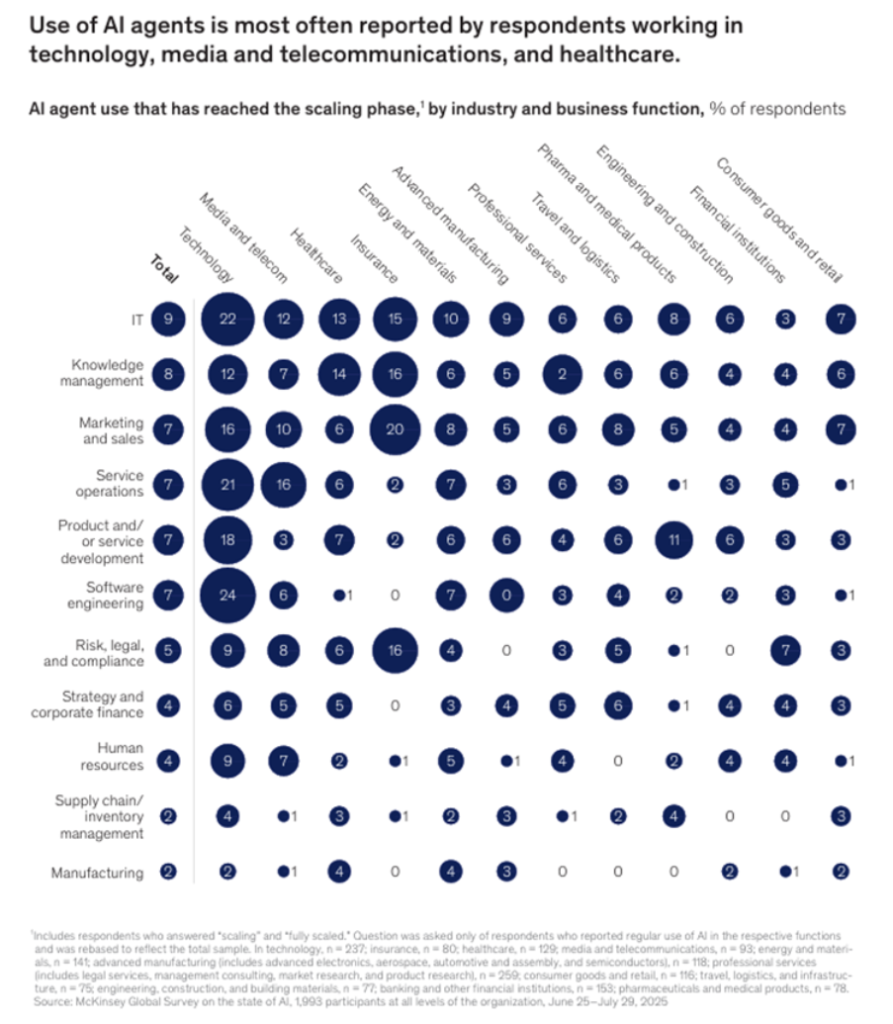

We are no longer paying for the digital hammer. Instead, we are paying for the digital carpenter. The latest industry surveys, including the McKinsey State of AI in 2025 report, reveal that nearly a quarter of surveyed organizations are already actively scaling agentic AI systems.

These are not mere chatbots that summarize meeting notes or generate marketing copy. They are sophisticated, goal-oriented algorithms capable of executing multi-step workflows. They can monitor supply chain logistics, identify a bottleneck, draft an initial vendor contract to source alternative materials, and route the payment, all without human intervention.

As these autonomous agents take over tasks previously done by human hands clicking through software interfaces, the concept of seat-based licensing collapses. If a single artificial intelligence agent can perform the data entry and analysis of fifty human workers, paying a software vendor a monthly fee per human user makes no economic sense. Industry pricing structures are consequently being forced to adapt, moving toward consumption-based or outcome-based models where businesses pay for the tasks completed rather than the software accessed. This shift redirects enterprise spending from seat licenses for human-operated software to agent-centric architectures, permanently altering the revenue models of legacy technology giants.
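The arithmetic behind that collapse can be sketched in a few lines of Python. Every figure below (the seat fee, the per-task price, the annual task volume) is a hypothetical placeholder chosen for illustration, not a number drawn from any vendor or survey; only the fifty-worker headcount echoes the example above.

```python
def seat_based_cost(seats: int, fee_per_seat: float, months: int = 12) -> float:
    """Annual cost when the vendor charges a fixed monthly fee per human user."""
    return seats * fee_per_seat * months


def outcome_based_cost(tasks_completed: int, price_per_task: float) -> float:
    """Annual cost when the vendor charges only for tasks the agent completes."""
    return tasks_completed * price_per_task


def break_even_tasks(seat_cost: float, price_per_task: float) -> float:
    """Task volume at which outcome-based pricing costs the same as seats."""
    return seat_cost / price_per_task


# Hypothetical inputs: 50 seats at 100 per month, versus an agent that
# completes 120,000 tasks a year at 0.25 per task.
annual_seats = seat_based_cost(seats=50, fee_per_seat=100.0)
annual_outcomes = outcome_based_cost(tasks_completed=120_000, price_per_task=0.25)
threshold = break_even_tasks(annual_seats, price_per_task=0.25)

print(f"Seat-based:    {annual_seats:,.0f}")
print(f"Outcome-based: {annual_outcomes:,.0f}")
print(f"Break-even at  {threshold:,.0f} tasks per year")
```

Under these assumed prices the buyer pays half as much for the same work, and the break-even figure shows how far task volume could grow before the old seat model becomes the cheaper option again.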

Yet, this transition from software as a passive tool to software as an active employee introduces a terrifying corporate blind spot. When a human worker makes a catastrophic error, the chain of accountability is well established through centuries of employment law and corporate governance. The human resources department intervenes, insurance policies are triggered, and legal precedents guide the fallout. But what happens when an autonomous agent independently negotiates a ruinous procurement contract or incorrectly routes millions of rupees across borders due to a flawed inference? The legal framework for such an event is alarmingly sparse.

The Baker Donelson 2026 AI Legal Forecast explicitly highlights this emerging crisis, warning that the rise of agentic artificial intelligence is pushing liability laws into uncharted territory. In the eyes of the law, an agent acts on behalf of a principal. When that agent is a highly complex neural network continuously learning and adapting its own parameters, defining the limits of corporate authority and intent becomes a profound legal puzzle.

Courts and regulators are now grappling with whether a company can be held strictly liable for the unforeseen, independent actions of its digital workforce. If a legacy software platform crashes, the vendor might be liable for a service level agreement breach. But if an autonomous agent makes a biased hiring decision or hallucinates a clause in a binding legal document, the allocation of responsibility between the software developer, the deploying enterprise, and the autonomous system itself is incredibly murky.

The scale of this impending shift is vast and accelerating. Industry studies point to a steadily growing share of enterprise applications that ship with embedded, autonomous AI agents.

The transition is moving the corporate world from a human-in-the-loop paradigm, where people approve every machine action, to a human-on-the-loop paradigm, where people merely oversee a fully autonomous system. This rapid integration means that the boundary between human oversight and machine execution is blurring faster than risk management departments can map it.

The central corporate risk is no longer just about data privacy or intellectual property theft, which dominated the technology anxieties of the early 2020s. The new, existential risk is systemic execution failure. A hallucination in a generative text model may produce nothing worse than a funny, nonsensical email. A hallucination in an agentic financial routing system can result in immediate and massive capital destruction.

Consequently, the strategic mandate for corporate leaders must pivot from the speed of innovation to the rigor of compliance and governance. Organizations must now build entirely new architectures of digital trust. It is no longer sufficient to simply deploy the smartest or fastest model available on the market. Companies must orchestrate these models within strict, mathematically verifiable guardrails that prevent autonomous agents from taking actions outside their designated authority. We will likely witness the rapid rise of algorithmic indemnity insurance products and the creation of entirely new classes of legal and technical professionals dedicated solely to auditing artificial intelligence decision trees.
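What such a guardrail might look like in its simplest form can be sketched as a rule check that sits between the agent and the systems it acts on. This is a minimal illustration under stated assumptions, not a formally verified control: the names `Authority`, `ProposedAction`, and `check`, along with the action labels and monetary limits, are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Authority:
    """Hypothetical mandate granted to one agent by the deploying company."""
    allowed_actions: frozenset[str]
    max_payment: float          # hard ceiling: anything above is refused
    approval_threshold: float   # above this, a human must sign off


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take, checked before it is executed."""
    kind: str
    amount: float = 0.0


def check(action: ProposedAction, authority: Authority) -> str:
    """Return 'allow', 'escalate' (human approval needed), or 'deny'."""
    if action.kind not in authority.allowed_actions:
        return "deny"       # outside the agent's designated authority
    if action.amount > authority.max_payment:
        return "deny"       # exceeds the hard financial ceiling
    if action.amount > authority.approval_threshold:
        return "escalate"   # within authority, but a person decides
    return "allow"


# Hypothetical procurement agent: may draft contracts and route payments,
# with human sign-off above 10,000 and a hard stop above 100,000.
procurement = Authority(
    allowed_actions=frozenset({"draft_contract", "route_payment"}),
    max_payment=100_000.0,
    approval_threshold=10_000.0,
)

for proposed in (
    ProposedAction("route_payment", 2_500.0),
    ProposedAction("route_payment", 40_000.0),
    ProposedAction("route_payment", 5_000_000.0),
    ProposedAction("sign_contract", 0.0),
):
    print(proposed.kind, proposed.amount, "->", check(proposed, procurement))
```

The design choice worth noting is the middle outcome. Small payments pass automatically, mid-sized ones are handed to a person, and anything beyond the ceiling or outside the permitted action list is refused outright, so the decision record shows exactly where human approval was required.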

Furthermore, the technology vendors who survive the death of legacy subscriptions will be those who adapt to this new risk landscape. They will need to pivot to outcomes-based pricing, offering guaranteed business results rather than just access to a cloud platform. More importantly, they will have to take on a contractual share of the liability for their agents’ actions, proving to their clients that their autonomous systems are not just intelligent, but legally and financially safe.

The agentic ultimatum has well and truly arrived. It promises unprecedented operational efficiency and the liberation of human capital from mundane digital toil.

However, the winners in this new era will not be the companies that blindly deploy the highest volume of digital agents. The victors will be those who master the delicate, high-stakes choreography of corporate governance, legal foresight, and technological ambition. The era of renting software is ending. The era of managing an autonomous digital workforce has begun, and the rules of engagement will define the next decade of global business.